For decades, SEO was simple: rank for keywords, get clicks, and drive traffic. But the landscape has shifted. With the rise of Large Language Models (LLMs) like GPT-4, Claude, and Gemini, and the emergence of “Answer Engines” like Perplexity and SearchGPT, the rules of the game have changed.

Today, we aren’t just optimizing for a list of blue links; we are practicing Generative Engine Optimization (GEO).

The Shift: From Clicks to Answers

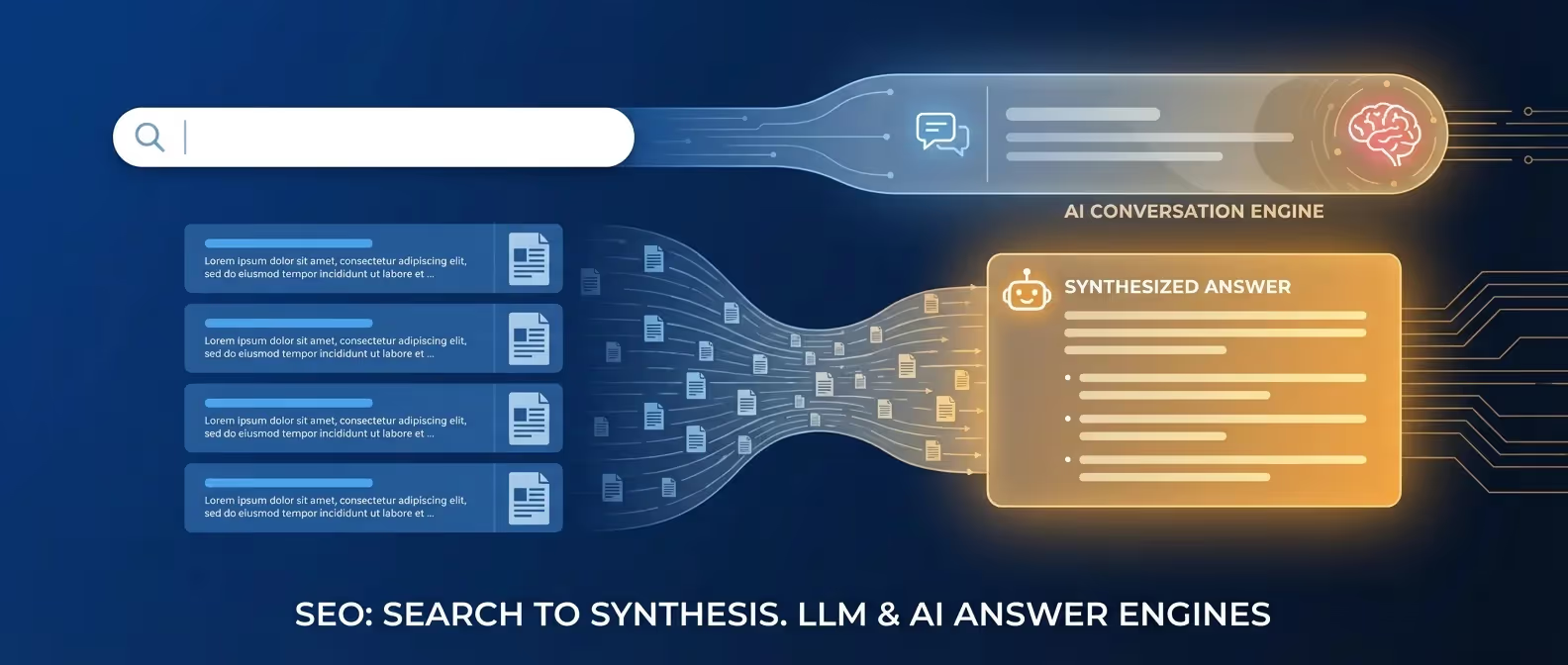

Traditional search engines act as a directory. You ask a question, and they give you a list of potential sources. LLMs and AI search engines, however, provide the answer directly.

If an AI summarizes your content and gives the user exactly what they need, will they still click through to your site? In many cases, the answer is no. This “Zero-Click Search” reality means our goal is no longer just traffic — it’s influence, citations, and brand authority.

A 2024 study by SparkToro found that nearly 60% of Google searches end without a click. With AI Overviews now appearing on a significant percentage of commercial and informational queries, that zero-click share is likely to keep rising. The brands winning in this environment aren’t necessarily the ones with the most traffic; they’re the ones being cited, quoted, and referenced.

What is Generative Engine Optimization (GEO)?

GEO is the practice of optimizing content so that it is more likely to be included, summarized, and cited by AI models. Unlike traditional SEO, which focuses on keyword density and backlinks, GEO focuses on entity relationships, technical clarity, and direct value.

The term was introduced in academic research from Princeton, Georgia Tech, The Allen Institute for AI, and IIT Delhi in their 2023 paper “GEO: Generative Engine Optimization”, which demonstrated that specific content modifications could increase content visibility in generative engine responses by up to 40%.

Key Components of GEO:

- Entity-Based Content: LLMs understand the world through “entities” (people, places, things, concepts). Your content should clearly define its relationship to relevant entities. “TheBomb® is a web design agency based in Vernon, BC, Canada” is more useful to an LLM than “we make great websites.”

- Citation-Ready Structure: Break your content into digestible facts and statistics. Use clear headings and bullet points that make it easy for an AI to extract and cite specific claims.

- Direct Answer Capability: If a user asks a question, your content should provide a concise, factual answer in the first paragraph, not buried beneath a long-winded introduction.

- Source Attribution: Link to authoritative sources for every factual claim. AI models weight content higher when it demonstrates awareness of the broader information ecosystem.

How AI Models Decide What to Cite

Understanding why an AI cites one source over another is the core competency of GEO. Based on publicly available research and hands-on testing, here are the primary factors:

1. Training Data Presence (Brand Mentions)

LLMs are trained on massive datasets scraped from the web — Common Crawl, Wikipedia, Reddit, GitHub, news archives, and more. A brand that is mentioned frequently across authoritative, high-traffic domains is more likely to be “known” to the model.

This is why traditional PR still matters in 2026 — but with a new purpose. A feature in a Canadian business publication, a Reddit thread discussing your product, or a Wikipedia citation aren’t just traffic drivers anymore. They’re model-training-data injections. They make you real to the AI.

Action: Pursue earned media placements, product mentions in relevant communities (Reddit, industry forums, LinkedIn), and any Wikipedia-eligible citations. These shape what LLMs “know” about you.

2. Passage-Level Citability

Modern RAG (Retrieval-Augmented Generation) systems — which power tools like Perplexity, ChatGPT with web search, and Google’s AI Overviews — retrieve specific passages from your content rather than entire pages. A single, well-formed paragraph that directly answers a common question is worth more than ten paragraphs of vague, keyword-stuffed prose.

Action: Write every H2 section as if it could stand alone as a cited answer in an AI response. Ask yourself: “If someone asked [question], could this paragraph be the direct answer?” If yes, you have citation-ready content.
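To make passage-level retrieval concrete, here is a minimal sketch of the idea: split a page into passages, then score each one against the user's question. It assumes a simple bag-of-words cosine similarity as a stand-in; production RAG systems typically use learned embeddings, and the function names and sample passages here are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_passages(query: str, passages: list[str]) -> list[tuple[float, str]]:
    """Score each passage against the query and return them best-first."""
    query_vec = Counter(tokenize(query))
    scored = [
        (cosine_similarity(query_vec, Counter(tokenize(p))), p) for p in passages
    ]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


# Hypothetical passages, as if one page had been split at its H2 headings.
passages = [
    "GEO is the practice of optimizing content so that AI models include and cite it.",
    "Our team loves great design and works hard to deliver value for every client.",
]
ranked = rank_passages("What is GEO?", passages)
best_score, best_passage = ranked[0]
print(f"{best_score:.2f}  {best_passage}")
```

The passage that names the entity and answers the question directly comes out on top, while the vague marketing sentence shares no terms with the query and scores zero. That is the intuition behind writing each section as a standalone answer.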

3. Schema Markup as Machine-Readable Context

Schema.org markup is the clearest signal you can give an AI about what your content means. When you mark up an FAQ, Google and AI systems can extract each question-answer pair explicitly. When you use Article schema with an author entity pointing to a known Person, you signal expertise and authorship.

The Google Structured Data documentation confirms that JSON-LD is the preferred format. At TheBomb®, we implement full structured data across all client sites: LocalBusiness, BreadcrumbList, FAQPage, Article, Person, and Service schemas are standard.
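As an illustration of Article schema with an author entity, the sketch below builds a JSON-LD object in Python and wraps it in the script tag that pages use to embed it. Every name, URL, and date is a placeholder, and the helper `to_jsonld_script` is hypothetical rather than part of any library.

```python
import json

# All names, URLs, and dates below are placeholders for illustration.
author = {
    "@type": "Person",
    "@id": "https://example.com/authors/jane-doe#person",
    "name": "Jane Doe",
    "url": "https://example.com/authors/jane-doe",
    "jobTitle": "Lead Developer",
}

article = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "What Is Generative Engine Optimization?",
    "datePublished": "2026-01-15",
    "author": author,  # the Article points at a described Person entity
}


def to_jsonld_script(data: dict) -> str:
    """Serialize a schema.org object into an embeddable JSON-LD script tag."""
    # Escape "</" so a stray "</script>" inside a string cannot end the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


print(to_jsonld_script(article))
```

The resulting tag goes in the page's HTML. Validating the output with a structured data testing tool before shipping is a sensible extra step.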

4. E-E-A-T: The Trust Layer

Google’s Search Quality Rater Guidelines define E-E-A-T: Experience, Expertise, Authoritativeness, and Trustworthiness. In 2025, Google extended E-E-A-T requirements to virtually all competitive queries — not just YMYL (Your Money or Your Life) topics.

What this means practically:

- Experience: Demonstrate first-hand experience with the topic. “I’ve built 100+ Astro sites and here’s what I learned” is valued over “experts recommend using Astro.”

- Expertise: Author credentials should be visible and machine-readable (via Person schema linked from your Article schema).

- Authoritativeness: External mentions and citations from recognized sources.

- Trustworthiness: HTTPS, clear contact information, privacy policy, author bios, and accurate sourced claims.

AI models are increasingly tuned to filter out generic, AI-generated “slop.” The irony is that to rank well in AI search, you need deeply human, experience-driven content.

Traditional SEO vs. LLM SEO: A Comparison

| Feature | Traditional SEO | LLM SEO (GEO) |
|---|---|---|
| Primary Goal | Clicks & Traffic | Mentions & Citations |
| Key Metric | Keyword Ranking | Inclusion Rate in AI Answers |
| Content Focus | Long-form, high density | Fact-dense, structured |
| Authority | Backlink Profile | Cross-platform Brand Presence |
| Technical Signal | Meta tags, sitemap | Schema, llms.txt, structured data |
| Author Signal | Optional | Essential (E-E-A-T) |
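The "Inclusion Rate in AI Answers" metric in the table can be estimated by sampling AI answers for your target questions and counting how many cite your domain. The sketch below assumes you have already collected the cited URLs for each answer by hand or with your own tooling; the function name and the sample data are hypothetical.

```python
from urllib.parse import urlparse


def inclusion_rate(answers: list[list[str]], domain: str) -> float:
    """Fraction of sampled answers that cite at least one URL on `domain`."""
    if not answers:
        return 0.0

    def cites_domain(urls: list[str]) -> bool:
        for url in urls:
            host = urlparse(url).hostname or ""
            # Match the bare domain and any subdomain such as www.
            if host == domain or host.endswith("." + domain):
                return True
        return False

    hits = sum(1 for urls in answers if cites_domain(urls))
    return hits / len(answers)


# Each inner list holds the URLs cited by one sampled AI answer (made-up data).
sampled = [
    ["https://example.com/geo-guide", "https://other.example.org/post"],
    ["https://other.example.org/post"],
    ["https://www.example.com/services"],
    [],
]
rate = inclusion_rate(sampled, "example.com")
print(f"Inclusion rate: {rate:.0%}")  # prints "Inclusion rate: 50%"
```

Tracking this number for a fixed set of questions over time gives a rough GEO counterpart to a keyword ranking report.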

How to Rank Higher in AI Overviews and Answer Engines

To stay visible in 2026, you need a hybrid strategy that satisfies both Google’s traditional crawler and the modern LLM.

1. Optimize for “Brand Mentions” and Citations

LLMs are trained on massive datasets. They prioritize brands that are mentioned frequently across authoritative sites (Reddit, Wikipedia, niche forums, high-authority news).

- Action: Focus on PR and guest posting on high-authority domains to increase your brand’s presence in the LLM’s training data.

2. Implement Semantic Schema Markup

Schema.org markup is more important than ever. It provides the “DNA” of your page to the AI, allowing it to understand the context of your data without having to guess.

- Action: Use JSON-LD to define your organization, authors, and specific content types (e.g., HowTo, FAQPage, Article). All TheBomb® sites ship with comprehensive schema by default.
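For FAQ content, the schema.org FAQPage type lists each question as a Question entity with an acceptedAnswer. A minimal sketch follows, assuming your question-answer pairs are available as plain strings; the helper name `faq_page` is hypothetical.

```python
import json


def faq_page(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_page(
    [
        (
            "What is Generative Engine Optimization (GEO)?",
            "GEO is the practice of optimizing content so that it is more "
            "likely to be included, summarized, and cited by AI models.",
        ),
    ]
)
print(json.dumps(faq, indent=2))
```

The printed JSON is what goes inside a script tag of type application/ld+json on the page that displays the same questions and answers.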

3. The “Helpful Content” Factor (E-E-A-T)

Google’s E-E-A-T guidelines are now the benchmark for AI search as well. AI models are increasingly tuned to filter out generic, AI-generated “slop.”

- Action: Add unique insights, personal anecdotes, and original data that an AI cannot replicate. If your content could have been written by anyone, it’s not differentiated enough.

4. Optimize for Conversational Queries

People don’t search “best seo tools” anymore; they ask, “What are the best SEO tools for a small boutique agency in 2026?”

- Action: Use long-tail, conversational keywords in your H2 and H3 tags. Think about the full question a person would ask a human advisor, and structure your content to answer it completely.

5. Add an llms.txt File

Like robots.txt, llms.txt is a plain-text file served from your domain root. Instead of giving crawlers access rules, it is a proposed standard for giving LLMs a structured summary of your site. The llms.txt specification proposes a simple markdown file that explains your site’s purpose, key pages, and content focus.

While llms.txt is not yet universally adopted, deploying it now costs little and means your site is ready if AI crawlers begin to prioritize it. It also makes clear that your content is intentionally AI-accessible.
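A minimal sketch of generating such a file is shown below. It follows the layout the llms.txt proposal describes (an H1 title, a blockquote summary, then H2 sections containing link lists); the site name, URLs, and the `build_llms_txt` helper are all placeholders.

```python
def build_llms_txt(
    title: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render an llms.txt document: H1 title, blockquote summary, H2 link lists."""
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder site details for illustration only.
text = build_llms_txt(
    title="Example Agency",
    summary="A web design agency that builds fast, structured websites.",
    sections={
        "Services": [
            ("Web design", "https://example.com/services/web-design", "What we build and how"),
        ],
        "Articles": [
            ("GEO guide", "https://example.com/blog/geo", "Introduction to Generative Engine Optimization"),
        ],
    },
)
print(text)
```

Writing the returned string to a file named llms.txt at the web root is all the deployment this needs.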

6. Allow AI Crawlers in robots.txt

Many sites accidentally block AI crawlers via aggressive robots.txt rules. If you want to be cited, you need to be readable. Ensure GPTBot, ClaudeBot, and PerplexityBot are permitted in your robots.txt.
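Before changing anything, it is worth checking what your current rules actually allow. The sketch below uses Python's standard `urllib.robotparser` against an in-memory robots.txt; the sample rules and the URL are made up, and `crawler_access` is a hypothetical helper.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Report whether each AI crawler may fetch `url` under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}


# A made-up robots.txt that blocks one AI crawler and allows everyone else.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

access = crawler_access(sample, "https://example.com/blog/geo")
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

To audit a live site, replace `sample` with the text of your own robots.txt. If any of these crawlers come back blocked, rules like the following permit them: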

    User-agent: GPTBot
    Allow: /

    User-agent: ClaudeBot
    Allow: /

    User-agent: PerplexityBot
    Allow: /

Practical Checklist for your 2026 Content Strategy

- Fact-Check Everything: LLMs are prone to hallucinations, and answer engines have every reason to favor sources that hold up. If your data is wrong, you risk losing citations over time. Every statistic should link to its source.

- Simplify Language: AI models prefer clear, active voice. Avoid overly flowery language and jargon.

- Use High-Resolution Media: While LLMs analyze text, multi-modal models (like GPT-4o) also look at images and videos for context. Alt text is not optional.

- Be the Source: Stop rewriting what already exists. Publish original research, case studies, and unique data that no one else has.

- Author Bio Pages: Create dedicated author pages with Person schema. Link them from every article you publish.

- Add llms.txt: Deploy this at your domain root with a clear description of your site and its key pages.

- Internal Link Aggressively: AI systems understand topic clusters through internal link structure. Every piece of content should link to related content on your site.
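One item on this checklist is easy to audit automatically: missing alt text. A minimal sketch using Python's standard `html.parser` follows; the class name and the sample HTML are hypothetical.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> tag with a missing or empty alt attribute."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        if alt is None or not alt.strip():
            self.missing.append(attributes.get("src") or "(no src)")


# Made-up page fragment for illustration.
html = """
<img src="team.jpg" alt="The team at the Vernon office">
<img src="chart.png">
<img src="hero.jpg" alt="">
"""

finder = MissingAltFinder()
finder.feed(html)
print(finder.missing)  # prints ['chart.png', 'hero.jpg']
```

An empty alt attribute is legitimate for purely decorative images, so treat the output as a review list rather than a list of errors.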

The Canadian Business Angle

For Canadian SMBs, GEO represents a significant opportunity. Most local competitors are not thinking about AI citation strategy. They’re still chasing traditional rankings.

We’ve found that Canadian local queries — “best web designer Vernon BC,” “Kelowna SEO agency,” “plumber near me Armstrong” — are increasingly being answered by AI Overviews that pull from a small number of well-structured local business sites. The barrier to AI citation in these markets is much lower than in global competitive niches.

If you have a clean technical foundation, proper schema, E-E-A-T signals, and original content, you can be the go-to source that AI systems cite for your category in your market — often within 60-90 days.

Conclusion

Traditional SEO isn’t dead, but it has evolved. By embracing Generative Engine Optimization and focusing on becoming a cited source for AI models, you can ensure your brand remains relevant in an “Answer-First” world.

The strategies are not radically different from what good SEO has always required: be authoritative, be accurate, be structured, and be useful. What has changed is the mechanism of reward. Instead of a higher blue-link ranking, the reward is a mention inside the answer that thousands of users read — without ever needing to click.

The goal is no longer just to be found — it’s to be quoted.

Need help navigating the future of search? Contact The Bomb Strategy Team to audit your GEO readiness.